AI Ethics

OpenAI Robotics Chief Resigns Over AI Military and Surveillance Concerns

A senior robotics executive at OpenAI has resigned amid controversy over the company’s agreement with the U.S. government, which could allow its artificial intelligence technology to be used in defense-related applications. Caitlin Kalinowski announced her departure after raising concerns about the possible deployment of AI systems in warfare and domestic surveillance operations. Her resignation comes shortly after OpenAI secured a contract with the U.S. Department of Defense.

Kalinowski said the decision was driven by principles rather than disagreements with colleagues, emphasizing the need for stronger safeguards before deploying advanced AI technologies in sensitive areas.

Concerns Over AI Use in Warfare and Surveillance

In public statements, Kalinowski described the risks she sees in deploying artificial intelligence in military and surveillance contexts.

She highlighted two issues in particular:

-

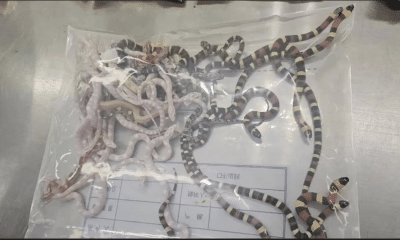

Mass surveillance of citizens without judicial oversight

-

Autonomous weapons systems operating without human authorization

According to Kalinowski, these potential uses of AI require careful ethical consideration and robust governance frameworks before any large-scale implementation.

She also said that the agreement appeared to move forward too quickly without clearly defined safety guardrails.

OpenAI Moves to Clarify Contract Terms

Following criticism surrounding the deal, OpenAI leadership said it would revise aspects of the contract to ensure stronger protections.

OpenAI CEO Sam Altman stated that the company intends to prevent its AI models from being used for domestic surveillance of U.S. citizens. The company also reiterated that it opposes the use of its technology to power fully autonomous weapons.

OpenAI officials emphasized that the agreement with the Pentagon aims to support responsible national security applications while maintaining ethical boundaries for AI deployment.

A spokesperson said the company is committed to ongoing dialogue with policymakers, employees, and civil society organizations about how artificial intelligence should be used in defense and public sector environments.

Rival AI Firms Take Different Approaches

The controversy emerged shortly after another leading AI company, Anthropic, declined to approve unrestricted military use of its AI systems.

Anthropic reportedly sought stronger safeguards before allowing its models to be used in defense environments. Negotiations ultimately fell through, after which the Pentagon moved forward with OpenAI instead.

The differing approaches highlight a growing debate within the AI industry over how emerging technologies should be used in national security contexts.

Rising Debate Over AI Governance

Kalinowski previously worked at Meta, where she led the development of augmented reality hardware. She joined OpenAI in 2024 to oversee hardware and robotics initiatives.

Her resignation reflects broader tensions across the technology sector as governments increasingly seek to deploy artificial intelligence in defense and intelligence operations.

Experts say the debate over AI governance is likely to intensify as the technology becomes more powerful and widely adopted.

The Growing Ethical Challenge of AI

As AI continues to transform industries ranging from healthcare to national security, technology companies face mounting pressure to balance innovation with responsible oversight.

Kalinowski’s departure underscores the unresolved ethical questions surrounding military AI, surveillance systems, and automated decision-making technologies.

For many observers, the incident highlights the urgent need for clearer global rules governing the use of artificial intelligence in high-risk environments.